Optimise Performance of Your Moodle Site – thanks, Catalyst.

“Performing Brain Surgery on the Beating Heart of Moodle” was the title of our colleague Cameron Ball’s presentation at Moodle Moot Global 2022.

Curious?

The enticing name of the session was not simply a marketing twist! As Cameron took us through his team’s journey of developing innovative solutions to streamline the core processes of our client’s Moodle site, we saw how this work really was ‘the beating heart of Moodle’, keeping the site alive and well.

Think of the basic tasks your Moodle site needs to perform at all times, like:

- Backing up courses

- Refreshing calendar events

- Converting files

- Any other ad hoc tasks that are not part of scheduled processes

Imagine the possible bottlenecks a long queue of tasks can create, as well as the potential blockages and the wasted server resources. Painful!

The Wow

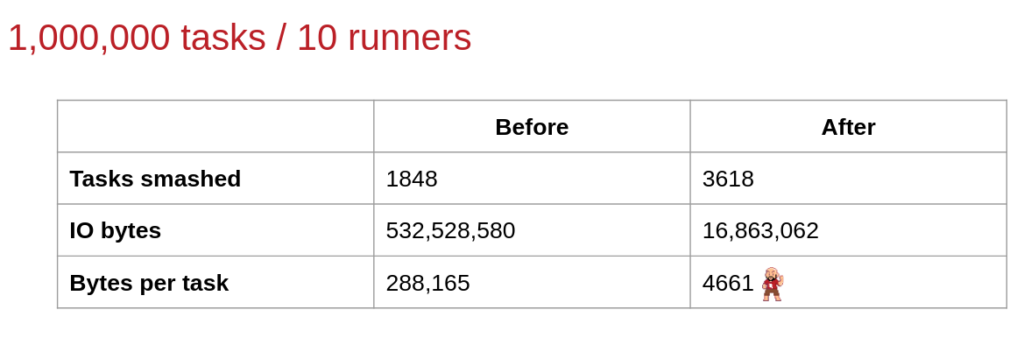

Through clever profiling and resource management, as well as “minicaching” and a bit of “neurosurgery” to Moode core code, here is what Cam and his team were able to achieve for one of Catalyst’s clients recently:

This achievement took creative thinking, six months of testing, numerous improvements and a lot of patience, but the results (zero regressions, zero blockages and the efficiencies shown in the table above) made it all worthwhile!

The How

Some of the questions you may have are:

- What is minicaching, and how big should each cache be?

- What is the profiling and scheduling strategy?

- And of course, what do you mean by “neurosurgery”?

While a detailed explanation of our process is beyond the scope of this blog post, here is a quick overview of each of the points above:

Minicaching –

A novel idea where each individual “task runner” maintains its own in-memory cache of tasks to run.

The caches of all runners may be identical at the start, but over time, as each runner processes tasks, it can infer information such as:

- How long each task takes to run

- Which types of tasks are currently running

From this information, each runner “organically” knows what it should be doing, even though every runner is following the exact same “naive” algorithm.

The end result is that each runner seems to “just know” what it should do next to ensure the tasks get processed in a timely manner.
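To make the idea more concrete, here is a minimal Python sketch of a single runner’s minicache. It is not Catalyst’s implementation or part of Moodle’s API (Moodle itself is written in PHP): the `Task` and `MiniCacheRunner` names, the selection rule (fewest runners already busy with a task type first, then the type observed to finish fastest) and the assumption that each runner can see how many tasks of each type are currently running are all hypothetical.

```python
from __future__ import annotations

from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class Task:
    task_id: int
    task_type: str  # e.g. "backup", "calendar", "file_conversion" (illustrative)


class MiniCacheRunner:
    """Hypothetical task runner that keeps a small in-memory cache of queued tasks."""

    def __init__(self, cache_size: int) -> None:
        self.cache_size = cache_size
        self.cache: deque[Task] = deque()
        # What this runner has learnt so far: total seconds and run count per task type.
        self.total_seconds: defaultdict[str, float] = defaultdict(float)
        self.run_count: defaultdict[str, int] = defaultdict(int)

    def refill(self, shared_queue: deque[Task]) -> None:
        """Top up the local cache; stands in for one bulk fetch from the shared queue."""
        while len(self.cache) < self.cache_size and shared_queue:
            self.cache.append(shared_queue.popleft())

    def record(self, task: Task, seconds: float) -> None:
        """Remember how long a finished task took."""
        self.total_seconds[task.task_type] += seconds
        self.run_count[task.task_type] += 1

    def average_seconds(self, task_type: str) -> float:
        """Observed mean duration; unseen types count as 0.0 so they get tried and measured."""
        count = self.run_count.get(task_type, 0)
        return self.total_seconds[task_type] / count if count else 0.0

    def next_task(self, running_by_type: dict[str, int]) -> Task | None:
        """Same naive rule on every runner: fewest runners busy with the type, then fastest."""
        if not self.cache:
            return None
        best = min(
            self.cache,
            key=lambda t: (running_by_type.get(t.task_type, 0), self.average_seconds(t.task_type)),
        )
        self.cache.remove(best)
        return best
```

In this sketch every runner applies the identical rule, but because each one holds a different slice of the queue and builds up its own timing history, the runners tend to drift towards different work without any central coordination.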

Profiling –

A big challenge was profiling the various algorithms.

The main bottleneck was database I/O, and when multiple processes are communicating with the database, how do you measure it?

The approach we settled on was to use a packet sniffer to measure exactly how many bytes were transferred between the application and the database.

Scheduling –

The algorithm takes some inspiration from fair-share scheduling.

Imagine you have a list of tasks to run and n servers available to run them.

Fair-share scheduling says something like: “classify each task into one of m discrete types” and ensure that each type of task occupies an equal share of the servers, i.e. at most n/m servers each (e.g., with 10 servers and 5 types of tasks, each type should run on at most 2 servers, because 10/5 is 2).

However, this approach is naive; if we follow it blindly, all servers will be full, and there will be no remaining servers to run new types of tasks that may show up later.

Our approach was to leave at least one server available at all times, using an algorithm that tries to infer which types of tasks finish quickly and always prioritises running those quick tasks.

This way, any new kind of task entering the queue can always run.
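The scheduling idea can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not the algorithm Catalyst shipped: `naive_fair_share` and `choose_type` are hypothetical names, the per-type cap is the plain n // m fair share from the example above, and the “reserve” rule (the last free server may only go to a task type with nothing currently running) is one simple way to keep room for new kinds of task. Average durations are assumed to come from observation, as in the minicache description.

```python
from __future__ import annotations

from collections import Counter


def naive_fair_share(n_servers: int, n_types: int) -> int:
    """Plain fair-share cap: each of the m task types may occupy at most n // m servers."""
    return n_servers // n_types


def choose_type(
    queued: list[str],
    running: Counter[str],
    n_servers: int,
    avg_seconds: dict[str, float],
) -> str | None:
    """Return the task type to start on a free server, or None to leave it idle."""
    free = n_servers - sum(running.values())
    if free <= 0 or not queued:
        return None
    types = set(queued) | set(running)
    cap = max(1, naive_fair_share(n_servers, len(types)))

    def eligible(task_type: str) -> bool:
        if free == 1:
            # The last free server is the reserve: only a type with nothing running may
            # take it, so a newly arrived kind of task is not locked out by running types.
            return running[task_type] == 0
        return running[task_type] < cap

    candidates = [t for t in set(queued) if eligible(t)]
    if not candidates:
        return None
    # Prefer types observed to finish quickly; unseen types default to 0.0 seconds.
    return min(candidates, key=lambda t: (avg_seconds.get(t, 0.0), t))
```

For example, with 10 servers and 9 already busy, `choose_type(["backup", "report"], ...)` would only start `report` if no `report` task were running yet; a queued `backup` would wait for a regular server to free up.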

The “Neurosurgery” –

This particular part of Moodle is absolutely crucial: without it, critical functionality grinds to a halt.

Much in the same way that your brain takes care of many functions autonomously, Moodle’s ad hoc task scheduling algorithm ensures that many “behind the scenes” functions happen seamlessly. Think of breathing or your heart beating: you don’t really think about them, but they’re absolutely crucial.

Picking apart this layer of Moodle is a high-risk, high-reward undertaking that requires a great deal of patience, skill and attention to detail.

Guess, check, improve (Professional programming only, do not attempt!):

An obvious question about the minicaching idea is: how big should each minicache be?

The approach we settled on is a time-honoured one, used by the likes of Sir Isaac Newton, and involves:

- Making a guess,

- Measuring how good that guess is,

- Adjusting the guess.
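As a rough illustration of that loop, the Python sketch below hill-climbs towards a cache size. It is an assumption-laden example rather than the method Catalyst used: `tune_cache_size` is a hypothetical helper, and `measure(size)` is a hypothetical callback standing in for a benchmark run that returns a cost to minimise (for example, bytes transferred to the database, as in the profiling described earlier).

```python
from __future__ import annotations

from typing import Callable


def tune_cache_size(
    measure: Callable[[int], float],
    guess: int,
    step: int = 8,
    min_size: int = 1,
    max_rounds: int = 50,
) -> int:
    """Guess, check, improve: hill-climb towards the cache size with the lowest measured cost."""
    best = guess
    best_cost = measure(best)  # check the initial guess
    for _ in range(max_rounds):
        if step < 1:
            break  # no smaller adjustment left to try
        improved = False
        for candidate in (best - step, best + step):  # try a guess either side
            if candidate < min_size:
                continue
            cost = measure(candidate)
            if cost < best_cost:  # keep a new guess only if it measures better
                best, best_cost = candidate, cost
                improved = True
        if not improved:
            step //= 2  # nothing better nearby: make finer adjustments
    return best
```

Real measurements are noisy, so averaging a few runs per candidate, or requiring a minimum margin of improvement before accepting a new guess, would be a sensible addition that this sketch leaves out.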

We have obtained rare footage of a developer implementing this highly elegant approach.

Please note, the following video is made available to you for educational purposes only; do not attempt to replicate. Instead, please get in touch with the Catalyst IT team for all of your guess-based development needs.

If you’re interested in learning more about the process Cameron and his team used to maximise the efficiency of their clients’ Moodle sites, please let us know by sending us a direct message on LinkedIn. If there is enough interest, we will run a special virtual session where you can learn the specifics.

You can also check out the Cron task manager page on Moodle for more details on the latest improvements to this project.

The Catalyst team are regular contributors to the Open Source community and have developed many improvements to Moodle and other Open Source products, contributing both to core code and to a wide range of plugins for the whole community to benefit from.

“Catalyst has been involved in the Moodle project for twenty years, since its beginnings, and things are only just getting started,” said Andrew Boag, Managing Director – Catalyst IT Australia.

“Moodle is a big part of what has allowed Catalyst IT to become the multi-regional open source consultancy group we are today, with 380 staff globally.”

“Having celebrated the 20-year anniversary at the Moot Global in Barcelona last year, we are looking forward to the next decade of Moodle and Catalyst partnership with enthusiasm.”

Catalyst offer enterprise-level software and IT services to over 600 organisations and provide 24/7 Follow The Sun support for our valued clients.